You might have heard about containers recently, the hot topic and buzzword in the cloud computing industry. But what exactly are they, and how can they add value to your business and existing cloud infrastructure?

Containers

To understand containers and why the need for them has arisen, it’s useful to look at Virtual Machines (VMs) first.

Containers and VMs have similar goals: to isolate an application and its dependencies into a self-contained unit that can run anywhere.

Both containers and VMs remove the need for dedicated physical hardware per application, which improves efficiency in terms of energy consumption and cost.

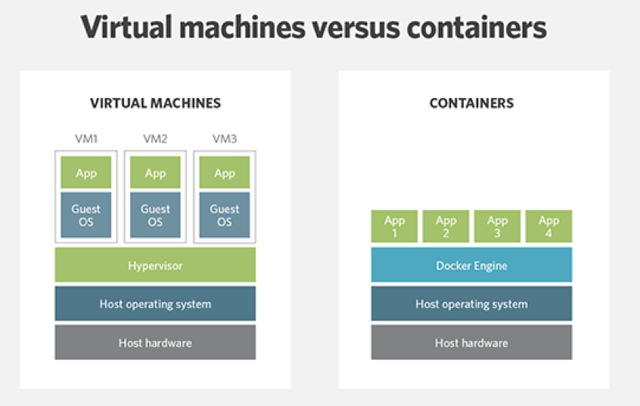

However, the main difference between containers and VMs is the architectural approach. A VM is essentially an emulation of a real computer that executes programs just as a physical computer would. VMs run on top of a physical machine using a hypervisor, which in turn runs either on a host operating system or directly on “bare metal”.

At the hosted private cloud and hyperscale public cloud level, where you are talking about thousands of VMs, many of them running workloads that have been shifted away from on-premises, you’re bound to run into scalability issues.

The long-term solution to VM sprawl is containers. While a VM provides hardware virtualisation, a container provides OS virtualisation by abstracting the “user space”. The key difference is that containers share the host system’s kernel with one another. An entire stack of containers, whether dozens, hundreds or even thousands, can run on top of a single instance of the host operating system, with a fraction of the footprint of a comparable VM running the same application. Additional containers can typically be started in seconds or less, whereas a VM can take minutes to boot.

The diagram below demonstrates that each container gets its own isolated user space to allow multiple containers to run on a single host machine. We can see that all the operating system level architecture is being shared across containers.
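One way to observe this kernel sharing for yourself is to ask the operating system which kernel a process is running on. The snippet below is a minimal sketch (the function name `kernel_info` is ours, chosen for illustration) that uses Python's standard `platform` module. Assuming a Linux host, running it directly on the host and again inside a container on that host should report the same kernel release, whereas a VM would report the kernel of its own guest operating system.

```python
import platform


def kernel_info() -> dict:
    """Return the kernel name and release as seen by this process."""
    uname = platform.uname()
    return {"system": uname.system, "release": uname.release}


if __name__ == "__main__":
    info = kernel_info()
    # On a Linux host, a container prints the host's kernel release here,
    # because the container has no kernel of its own.
    print(f"{info['system']} kernel release: {info['release']}")
```

Note that on macOS or Windows desktops, Docker runs containers inside a lightweight Linux VM, so in that setup the containers share that VM's kernel rather than the kernel of the desktop operating system.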

Containers allow application developers and system administrators to package, ship and run distributed application components with guaranteed platform parity across different environments.

So, there’s potential for container-based virtualisation to create a broadly portable cloud environment. It requires fewer resources than VMs and provides a more scalable and flexible virtualisation layer.

Where does Docker come in?

Docker is the most widely known containerisation technology. It provides a way to “package” complex applications as images, upload them to public or private repositories and then download them to Docker hosts running in public or private clouds. This is similar to how apps are downloaded from an app store to your smartphone.
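The package, upload and download cycle described above maps onto a handful of Docker CLI commands: `docker build`, `docker push`, `docker pull` and `docker run`. The following is a rough sketch rather than a recommended deployment script. The helper names `docker_workflow` and `run_workflow` and the image name `registry.example.com/team/my-app:1.0` are hypothetical, and by default the script only prints the commands instead of executing them.

```python
import subprocess
from typing import List


def docker_workflow(image: str, build_context: str = ".") -> List[List[str]]:
    """Build the Docker CLI commands for a package/upload/download/run cycle."""
    return [
        # Package the application (expects a Dockerfile in build_context).
        ["docker", "build", "-t", image, build_context],
        # Upload the image to the repository named in the image tag.
        ["docker", "push", image],
        # On the target Docker host: download the image...
        ["docker", "pull", image],
        # ...and start a container from it, removing it again on exit.
        ["docker", "run", "--rm", image],
    ]


def run_workflow(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(" ".join(cmd))
        if not dry_run:
            subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Hypothetical image name; replace with your own repository and tag.
    run_workflow(docker_workflow("registry.example.com/team/my-app:1.0"))
```

In practice the build and push steps would run on a developer machine or build server, and the pull and run steps on the Docker host in your cloud environment. They are shown together here only to illustrate the full cycle.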

Container offerings like Docker are "democratising" virtualisation by providing it to developers in a usable, application-focused form. Whereas access to virtual machine virtualisation tends to be provided through, and governed by, gatekeepers in infrastructure and operations, Docker is being adopted from the ground up by developers using a DevOps approach.

As our reliance on VMs decreases, containers will provide for much higher compute densities resulting in an overall decrease in the cost of cloud computing.

So, as Docker and its related container technologies continue to mature, you should consider re-architecting or modernising your apps for containerisation for use in public and private clouds. In my opinion, introducing containers to your infrastructure will benefit your organisation in a number of ways. Their portability, both internally and in the cloud, coupled with their low cost makes them a great alternative to full-blown virtual machines.

If you want to chat further about containers or find out more about nine.ch and how we can help you, visit our website www.nine.ch or get in touch.

We would be pleased to count you among our readers soon. Let us know if you have any questions, comments, suggestions or wishes - we are looking forward to your input!